You can add a connection to a Hive database using ThoughtSpot DataFlow.

Follow these steps:

-

Click Connections in the top navigation bar.

-

In the Connections interface, click Add connection in the top right corner.

-

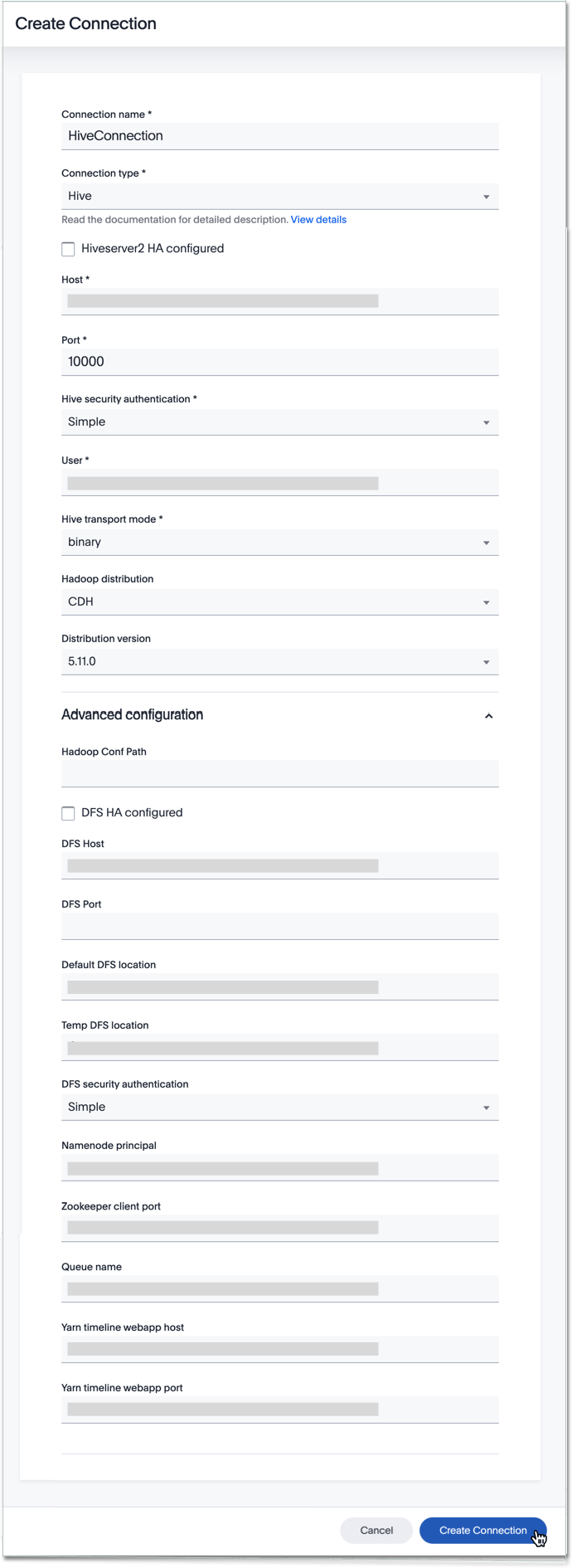

In the Create Connection interface, enter the Connection name, and select the Connection type.

-

After you select the Hive Connection type, the rest of the connection properties appear.

See the Create connection screen for Hive

- Connection name

  Name your connection.

- Connection type

  Choose the Hive connection type.

- HiveServer2 HA configured

  Select this option if you use HiveServer2 High Availability (HA).

- HiveServer2 zookeeper namespace

  Specify the ZooKeeper namespace. The default value is hiveserver2. Only when using HiveServer2 HA.

- Host

  Specify the hostname or the IP address of the Hadoop system.

  Mandatory field. Only when not using HiveServer2 HA.

- Port

  Specify the port. Only when not using HiveServer2 HA.

- Hive security authentication

  Specify the type of security protocol used to connect to the instance. Select the authentication type that matches your security setup, and provide the details it requires.

- User

  Specify the user to connect to Hive. This user must have data access privileges. For simple, LDAP, and SSL authentication only.

- Trust store

  Specify the trust store name for authentication.

  Mandatory field. For SSL and Kerberos authentication only.

- Trust store password

  Specify the password for the trust store.

  Mandatory field. For SSL and Kerberos authentication only.

- Hive transport mode

  Specify the network protocol used for communication between Hive nodes. Applicable only for the Hive process engine.

  Mandatory field.

- HTTP path

  Specify the HTTP path to use when the HTTP transport mode is selected.

  Mandatory field. For HTTP transport mode only.

- Hadoop distribution

  Specify the Hadoop distribution you are connecting to.

  Mandatory field.

- Distribution version

  Specify the version of the distribution chosen above.

  Mandatory field.

- Hadoop conf path

  By default, the system picks the Hadoop configuration files from the HDFS. To override, specify an alternate location. Applies only when using configuration settings that are different from global Hadoop instance settings.

- DFS HA configured

  Select this option if you use High Availability for DFS. For Hadoop Extract only.

- DFS name service

  Specify the logical name of the HDFS nameservice.

  Mandatory field. For DFS HA and Hadoop Extract only.

- DFS name node IDs

  Specify a comma-separated list of NameNode IDs. The system uses this property to determine all NameNodes in the cluster. The XML property name is dfs.ha.namenodes.[dfs.nameservices], where [dfs.nameservices] stands for the nameservice name. For DFS HA and Hadoop Extract only.

- RPC address for namenode1

  Specify the fully-qualified RPC address of the first listed NameNode. Defined as dfs.namenode.rpc-address.[dfs.nameservices].[name node ID 1]. For DFS HA and Hadoop Extract only.

- RPC address for namenode2

  Specify the fully-qualified RPC address of the second listed NameNode. Defined as dfs.namenode.rpc-address.[dfs.nameservices].[name node ID 2]. For DFS HA and Hadoop Extract only.

- DFS host

  Specify the DFS hostname or the IP address.

  Mandatory field. For Hadoop Extract only, when not using DFS HA.

- DFS port

  Specify the associated DFS port.

  Mandatory field. For Hadoop Extract only, when not using DFS HA.

- Default DFS location

  Specify the default source/target location.

  Mandatory field. For Hadoop Extract only.

- Temp DFS location

  Specify the location for creating the temp directory.

  Mandatory field. For Hadoop Extract only.

- DFS security authentication

  Select the type of security that is enabled.

  Mandatory field. For Hadoop Extract only.

- Hadoop RPC protection

  Hadoop cluster administrators control the quality of protection using the configuration parameter hadoop.rpc.protection. Specify the value that the cluster uses.

  Mandatory field. When using Kerberos DFS security authentication and Hadoop Extract.

- Hive principal

  Specify the principal for authenticating Hive services.

  Mandatory field.

- User principal

  Specify the user principal associated with the keytab. To authenticate with a keytab, you need a keytab file generated by the Kerberos administrator, and the user principal associated with that keytab (configured while enabling Kerberos).

  Mandatory field.

- User keytab

  Specify the keytab file. To authenticate with a keytab, you need a keytab file generated by the Kerberos administrator, and the user principal associated with that keytab (configured while enabling Kerberos).

  Mandatory field.

- KDC host

  Specify the KDC host name. The KDC (Kerberos Key Distribution Center) is a service that runs on a domain controller server role. This value is configured in the Kerberos configuration file, /etc/krb5.conf.

  Mandatory field.

- Default realm

  Specify the default Kerberos realm. A Kerberos realm is the domain over which a Kerberos authentication server has the authority to authenticate a user, host, or service. This value is configured in the Kerberos configuration file, /etc/krb5.conf.

  Mandatory field.

- Queue name

  Specify the queue name, as it appears in the comma-separated value of yarn.scheduler.capacity.root.queues.

  Mandatory field. For Hadoop Extract only.

- YARN web UI port

  Specify the port of the web UI that YARN provides for the ResourceManager. The default is 8088.

  Mandatory field. For Hadoop Extract only.

- Zookeeper quorum host

  Specify the value of hadoop.registry.zk.quorum from yarn-site.xml.

  Mandatory field. Only when not using HiveServer2 HA.

- Yarn timeline webapp host

  Specify the IP address of the YARN timeline service web application.

  Mandatory field.

- Yarn timeline webapp port

  Specify the port associated with the YARN timeline service web application.

  Mandatory field.

- Yarn timeline webapp version

  Specify the version associated with the YARN timeline service web application.

  Mandatory field.

See Connection properties for details, defaults, and examples.
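The DFS HA properties above take their values from the cluster's hdfs-site.xml. The following Python sketch shows one way to look those values up before filling in the form. It is an illustration only, not part of DataFlow: the sample XML, the nameservice `mycluster`, the NameNode IDs, the host names, and the helper names `read_properties` and `dfs_ha_fields` are all hypothetical.

```python
import xml.etree.ElementTree as ET

# Hypothetical hdfs-site.xml content; nameservice, IDs, and hosts are made up.
SAMPLE_HDFS_SITE = """<configuration>
  <property><name>dfs.nameservices</name><value>mycluster</value></property>
  <property><name>dfs.ha.namenodes.mycluster</name><value>nn1,nn2</value></property>
  <property><name>dfs.namenode.rpc-address.mycluster.nn1</name><value>nn1.example.com:8020</value></property>
  <property><name>dfs.namenode.rpc-address.mycluster.nn2</name><value>nn2.example.com:8020</value></property>
</configuration>"""


def read_properties(xml_text):
    """Return the <name>/<value> pairs of a Hadoop configuration file as a dict."""
    root = ET.fromstring(xml_text)
    props = {}
    for prop in root.iter("property"):
        name = prop.findtext("name")
        value = prop.findtext("value")
        if name is not None:
            props[name.strip()] = (value or "").strip()
    return props


def dfs_ha_fields(props):
    """Derive the DFS HA form values from the parsed properties."""
    # Assumption: the first listed nameservice is the one to connect to.
    nameservice = props["dfs.nameservices"].split(",")[0].strip()
    ids = [i.strip() for i in props[f"dfs.ha.namenodes.{nameservice}"].split(",")]
    rpc = [props[f"dfs.namenode.rpc-address.{nameservice}.{i}"] for i in ids]
    return {
        "DFS name service": nameservice,
        "DFS name node IDs": ",".join(ids),
        "RPC addresses": rpc,
    }


fields = dfs_ha_fields(read_properties(SAMPLE_HDFS_SITE))
for label, value in fields.items():
    print(f"{label}: {value}")
```

To run it against a real cluster, replace the sample string with the contents of that cluster's hdfs-site.xml.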


- Click Create connection.
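For Kerberos authentication, the KDC host and Default realm values come from the Kerberos configuration file, /etc/krb5.conf. The Python sketch below shows one way to read them from that file. It is an illustration only: the sample file content, the realm `EXAMPLE.COM`, the host `kdc.example.com`, and the helper name `kerberos_fields` are hypothetical, and a real krb5.conf may list several realms or KDCs.

```python
import re

# Hypothetical krb5.conf content; the realm and host names are made up.
SAMPLE_KRB5_CONF = """
[libdefaults]
    default_realm = EXAMPLE.COM

[realms]
    EXAMPLE.COM = {
        kdc = kdc.example.com:88
        admin_server = kdc.example.com
    }
"""


def kerberos_fields(conf_text):
    """Extract the default realm and the first KDC host listed for that realm."""
    realm_match = re.search(r"^\s*default_realm\s*=\s*(\S+)", conf_text, re.MULTILINE)
    if realm_match is None:
        raise ValueError("default_realm not found")
    realm = realm_match.group(1)

    # Find the "REALM = { ... }" block that belongs to the default realm.
    block_match = re.search(re.escape(realm) + r"\s*=\s*\{(.*?)\}", conf_text, re.DOTALL)
    kdc_host = None
    if block_match:
        kdc_match = re.search(r"^\s*kdc\s*=\s*(\S+)", block_match.group(1), re.MULTILINE)
        if kdc_match:
            # Drop an optional ":port" suffix so only the host name remains.
            kdc_host = kdc_match.group(1).split(":")[0]
    return {"Default realm": realm, "KDC host": kdc_host}


kerberos = kerberos_fields(SAMPLE_KRB5_CONF)
print(kerberos)
```

The sketch returns only the first `kdc` entry of the default realm. If your file lists several, choose the one your administrator recommends.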